Why Frances Haugen Is ‘Super Scared’ About Facebook’s Metaverse

A version of this article was published in TIME’s newsletter Into the Metaverse. Subscribe for a weekly guide to the future of the Internet.

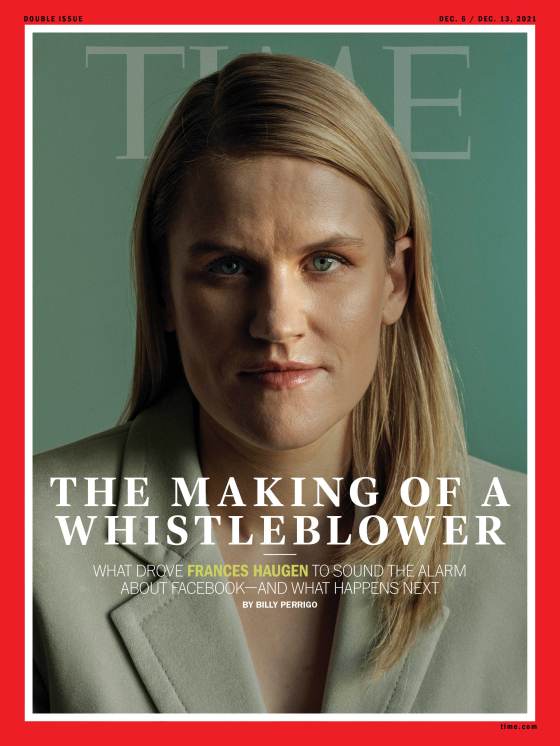

My friend and colleague Billy Perrigo has spent years writing about the perils of Big Tech: how it spreads misinformation, fosters extremist networks around the world and even puts democracy at risk. Last month, Billy traveled to Paris to interview Facebook whistleblower Frances Haugen for the cover story of our Dec. 6 issue. He talked to Haugen about a wide range of topics, including Facebook’s version of the metaverse, but that part of the conversation didn’t make it into the issue. So this week, I’m handing the newsletter over to Billy for more on his conversation with Haugen. –Andrew R. Chow

——

In an extravagant October keynote, Facebook CEO Mark Zuckerberg appeared as a cartoon avatar to tell us that one day, our interactions with friends and colleagues, as well as our leisure time, will no longer be confined to 2D social media platforms such as Instagram or Facebook. Instead, we will inhabit a 3D virtual universe of boundless possibility. Building that world, Zuckerberg said, would be the mission of Facebook, now renamed Meta.

When I traveled to Paris in November to interview Frances Haugen, these questions were on my mind: Could Facebook shed its toxic image? Would people be willing to set aside all the revelations of the past five years? And, more important, could people trust Zuckerberg to build a virtual world that is safe for all its users?

Haugen, a former Facebook employee, leaked thousands of pages of company documents to U.S. authorities and the media this fall. The documents showed that Facebook, which renamed itself in the wake of the revelations, knew far more than the public had ever realized about the harms of its products, particularly Facebook and Instagram.

When I asked her about the metaverse, Haugen’s answer focused on the extra forms of surveillance that would be necessary for any meaningful kind of metaverse experience. “I am worried that if companies become metaverse companies, individuals won’t get to consent anymore on whether or not to have Facebook’s sensors—their microphones in their homes,” Haugen told me. “This company—which has already shown it lies to us whenever it’s in its own interests—we’re supposed to put cameras and microphones for them in our homes?” she said.

I also asked Haugen: what kinds of safety risks don’t exist today, but might exist in a future where we live parts of our lives in the metaverse? “I’m super scared,” she said. Then she proposed a thought experiment:

“So, just imagine this with me. You go into the metaverse, and your avatar is a little more attractive than you are. You have nicer clothes than you have in reality. Your apartment is more stylish, more calm. Then you take off your headset and go to brush your teeth at the end of the night. And maybe you just don’t like yourself in the mirror as much. That cycle… I’m super worried that people are going to look at their apartment, which isn’t as nice, and look at their face or their body, which isn’t as nice, and say: ‘I would rather have my headset on.’ And I haven’t heard Facebook articulate any plan on what to do about that.”

Haugen’s answer vividly brought to mind one of the documents in her whistleblower disclosures. It concerned Instagram’s effect on the mental health and well-being of teens, especially teenage girls. Facebook’s own researchers had run surveys and found, among other shocking statistics, that of teenage girls who said they experienced bad feelings about their bodies, 32% said Instagram made them feel worse. Among teens who reported suicidal thoughts, 13% of British users and 6% of American users traced the desire to kill themselves to Instagram, the documents (first reported by the Wall Street Journal) showed.

Facebook has said that its research shows social media use can have both positive and negative effects on mental health, and that it is experimenting with ways to make Instagram safer for its most vulnerable users. On Dec. 7, Instagram said it would begin “nudging teens toward different topics if they’ve been dwelling on one topic for a long time,” and would soon be launching new tools for parents and guardians to “get them more involved” in what their teenagers do on the platform.

Two months on from the revelations, it’s still unclear to what extent Facebook is willing to accept that its platforms have caused real-world harms. “The individual humans are the ones who choose to believe or not believe a thing; they are the ones who choose to share or not share a thing,” said Andrew Bosworth, the Meta executive currently in charge of augmented and virtual reality, in an interview with Axios on HBO that aired Sunday.

Even when the company does acknowledge that it has contributed to harm, its “integrity” solutions tend to be partial or retroactive. Why, then, should the public trust Zuckerberg to act any differently with his version of the metaverse? I asked Haugen whether she trusted Zuckerberg when he said, in his keynote, that he would build safety into Facebook’s metaverse from the beginning.

“I think the question is not whether or not I believe him or not,” Haugen said. “I believe any technology with that much influence deserves oversight from the public.”

It’s a message that cuts to the core of technology’s systemic problem. Technologists build the future, and democratic and civic institutions are slow to step in and set limits on what is acceptable in that new world, including protections for vulnerable people, safeguards against misinformation and polarization, and restrictions on monopolistic power. Facebook jettisoned its controversial motto “move fast and break things” in 2014, but the phrase still neatly encapsulates how the company, and the wider industry, works when introducing new tech to the world.

Haugen has some suggestions for a process, applicable to the metaverse, that could allow regulators to weigh the benefits of technological innovation against its potential for societal harm. “At a minimum, they should have to tell us what the real harms are,” Haugen says, referring to obligations she would like to see regulators place on Facebook. “They should also have to listen to us when we articulate harms. And we should have a process where we can have a conversation in a structured way.”

But regulation is only one part of the problem. Another, thornier issue is prioritization, which can only be decided at the highest levels of management and which shows up in investment decisions and corporate culture. For years, Facebook has said that fixing its platforms is its top priority.

But in his keynote, Zuckerberg said that Facebook’s number one priority was now building the metaverse. He didn’t explicitly say that safety had been downgraded, but you only have to look at the numbers to see where the problem lies. Recently, Facebook has been rebutting journalists’ questions with the statistic that it now spends $5 billion per year on keeping its platforms safe. In October, however, the company announced that it planned to spend $10 billion on the metaverse in 2021 alone, a figure it said would keep growing in future years.

Do the math.

